AI models matter because different models excel at different things—some are faster and cheaper, some are better at deep reasoning, and some are built for multimodal inputs (text + images) or even real-time coding and image generation. Recently, OpenClaw has become a popular topic in the community, reflecting a broader shift toward making AI more practical and workflow-friendly.

In this guide, I’ll cover today’s most popular AI models (including LLMs and a leading image model), keep it practical, and update the list over time as new releases land.

The Most Popular AI Models You Cannot Miss


GPT-5.4 Thinking / GPT-5.4 Pro (OpenAI)

Release date: Mar 5, 2026

GPT-5.4 is OpenAI’s newest frontier model designed for professional work, rolling out across ChatGPT (as GPT-5.4 Thinking), the API, and Codex. OpenAI also released GPT-5.4 Pro for maximum performance on complex tasks.

Main Features:

- More capable and efficient for professional work: Stronger performance across real “work outputs” like documents, spreadsheets, presentations, and tool-based workflows.

- Better reasoning + coding + agent workflows in one model: Incorporates frontier coding capability while improving how it works across tools and software environments.

- Two tiers for different needs: GPT-5.4 Thinking for most users; GPT-5.4 Pro for people who want maximum performance on complex tasks.

Best for: Deep analysis, professional knowledge work, long multi-step tasks, and workflows that combine reasoning + coding + tool use.

Nano Banana 2 (Google DeepMind)

Release date: Feb 26, 2026

Nano Banana 2 is Google DeepMind’s latest image model that combines the advanced capabilities of Nano Banana Pro with the speed of Gemini Flash—designed for faster iteration and high-quality image generation/editing across Google products.

Main Features:

- Pro-level capabilities at Flash speed: High-quality outputs with faster editing and iteration.

- Image generation + editing in one workflow: Designed for multi-turn creation and refinement.

- Production-friendly safety signals: Google highlights SynthID and content credentials improvements alongside the rollout.

Best for: Fast, high-quality image creation and editing—marketing visuals, creative iteration, and “generate + refine” workflows.

GPT-5.3-Codex-Spark (OpenAI)

Release date: Feb 12, 2026

GPT-5.3-Codex-Spark is OpenAI’s first model designed for real-time coding in Codex, optimized for near-instant interaction and rapid iteration (research preview).

Main Features:

- Real-time coding speed: Designed to feel near-instant, delivering extremely fast generation for interactive coding loops.

- Built for rapid iteration: Great for targeted edits, reshaping logic, refining interfaces, and quick “try → tweak → try again” cycles.

- Clear constraints (by design): Text-only with a 128K context window at launch, tuned to keep edits lightweight unless you ask for more.

Best for: Developers who want ultra-fast iteration: prototyping, quick fixes, interactive pair-programming, and high-frequency edit loops.

Gemini 3 Flash (Google)

Release date: Dec 17, 2025

Gemini 3 Flash is positioned as a fast, cost-effective “frontier intelligence” model designed for speed while keeping strong reasoning.

Main Features:

- Speed-first positioning (fast responses + “Thinking” mode for harder problems in the Gemini app).

- Multimodal support across text + other modalities (Google frames Gemini 3 as multimodal across products).

- Designed to reduce the tradeoff between “fast but dumb” and “smart but slow.”

Best for: High-volume workflows: quick drafting, fast Q&A, lightweight reasoning, and “good enough” multimodal tasks.

Gemini 3 Pro (Google)

Release date: Nov 18, 2025

Gemini 3 Pro is Google’s most capable Gemini 3 model tier (reasoning + multimodal), positioned for advanced tasks.

Main Features:

- Advanced reasoning + multimodal positioning across Google products.

- Strong vision/document/spatial/video understanding (highlighted in the developer post).

- A “thinking” choice for harder math/code in Google’s model picker experiences.

Best for: Harder tasks where quality matters, such as complex reasoning, doc/vision understanding, and advanced math/code.

Claude Haiku 4.5 (Anthropic)

Release date: Oct 15, 2025

Claude Haiku 4.5 is Anthropic’s small/fast model tier, positioned for lower cost + high speed while retaining strong capability.

Main Features:

- Speed + cost focus (Anthropic frames it as cheaper and faster while staying strong).

- Good coding performance for a “small” model (Anthropic compares it favorably to earlier tiers).

- Practical for high-throughput usage where latency/budget matter.

Best for: Fast everyday tasks: short content, quick analysis, high-volume chat/support, lightweight coding help.

Claude Sonnet 4.5 (Anthropic)

Release date: Sep 29, 2025

Claude Sonnet 4.5 is positioned as a frontier model for coding/agents/computer use, with large gains in reasoning and math.

Main Features:

- Agent + computer-use strength (Anthropic emphasizes “using computers” capability).

- Coding leadership positioning (Anthropic claims top-tier coding performance).

- Stronger reasoning/math vs earlier Claude versions (per release post).

Best for: Serious building work: coding agents, longer tasks, complex reasoning, “operate tools/UI” workflows.

o3 (OpenAI)

Release date: Apr 16, 2025

OpenAI o3 is part of OpenAI’s o-series reasoning models trained to “think longer,” with emphasis on reasoning + tool use (including image reasoning).

Main Features:

- Reasoning-first orientation (o-series designed to think longer before responding).

- Tool use in ChatGPT and via API function calling.

- “Think with images” style visual reasoning (OpenAI highlights image manipulation during reasoning).

Best for: Hard reasoning tasks (math/logic/analysis) + workflows that benefit from deliberate thinking and tool usage.
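Since “tool use via API function calling” is one of o3’s listed features, here is a minimal sketch of what the application side of that loop looks like. It assumes the OpenAI Chat Completions `tools` format (each function described with a JSON Schema) and makes no API request: the `get_weather` tool, the `REGISTRY` dict, and the simulated tool call are hypothetical, and the snippet only shows how a returned tool call is parsed, executed, and turned into a `tool` message.

```python
import json
from typing import Any, Callable


# Hypothetical tool: a real app would call a weather service here.
def get_weather(city: str, unit: str = "celsius") -> dict[str, Any]:
    return {"city": city, "temperature": 21, "unit": unit}


# Tool definition in the Chat Completions "tools" format (JSON Schema parameters).
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Get the current weather for a city.",
            "parameters": {
                "type": "object",
                "properties": {
                    "city": {"type": "string"},
                    "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
                },
                "required": ["city"],
            },
        },
    }
]

# Hypothetical registry mapping tool names the model may emit to local callables.
REGISTRY: dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def dispatch(tool_call: dict[str, Any]) -> dict[str, Any]:
    """Run one tool call and build the message that reports its result back."""
    name = tool_call["function"]["name"]
    if name not in REGISTRY:
        raise ValueError(f"unknown tool: {name}")
    # The model sends arguments as a JSON-encoded string, not a dict.
    args = json.loads(tool_call["function"]["arguments"])
    result = REGISTRY[name](**args)
    return {
        "role": "tool",
        "tool_call_id": tool_call["id"],
        "content": json.dumps(result),
    }


# Simulated tool call shaped like one a model returns (hypothetical values).
simulated_call = {
    "id": "call_123",
    "type": "function",
    "function": {"name": "get_weather", "arguments": '{"city": "Paris"}'},
}

if __name__ == "__main__":
    print(dispatch(simulated_call))
```

In a real loop you would send `TOOLS` with the request, append the dispatched message to the conversation, and call the model again so it can use the result. Keeping a name-to-function registry (rather than calling whatever name the model emits) means an unexpected tool name fails loudly instead of executing arbitrary code.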

Llama 4 (Meta)

Release date: Apr 5, 2025

Llama 4 is Meta’s open-weight model family (Llama 4 Scout and Llama 4 Maverick at launch), positioned as natively multimodal.

Main Features:

- Open(-weight) ecosystem friendliness (commonly used for self-hosting and customization).

- Mixture-of-Experts variants (Scout/Maverick described in model card materials).

- Native multimodality (text and image inputs).

Best for: Teams who want control: self-hosting, fine-tuning, custom pipelines, privacy/compliance, cost optimization.

Grok 3 (xAI)

Release date: Feb 19, 2025

Grok 3 is xAI’s flagship model, positioned around strong reasoning and broad knowledge (per xAI’s announcement).

Main Features:

- Flagship positioning as xAI’s most advanced model at the time.

- Reasoning emphasis (framed as “age of reasoning agents”).

- Strong general capability intended for assistant-like experiences.

Best for: General assistant workflows, especially if your team is already in the xAI/X ecosystem.

Qwen2.5-Max (Qwen / Alibaba)

Release date: Jan 28, 2025

Qwen2.5-Max is a large-scale MoE model from the Qwen family, available via Qwen Chat and API.

Main Features:

- Large-scale MoE positioning (Qwen frames it as a big intelligence jump).

- API availability (the announcement references the API model name `qwen-max-2025-01-25`).

- Strong general capability for multilingual / enterprise-style usage (common adoption pattern).

Best for: Teams needing a strong alternative model option (especially for multilingual/global use cases).

DeepSeek-R1 (DeepSeek)

Release date: Jan 20, 2025

DeepSeek-R1 is a reasoning-focused model presented in an arXiv paper, emphasizing RL-based training for reasoning behavior.

Main Features:

- Reasoning-focused training via reinforcement learning.

- Research framing + open releases (paper mentions open-sourcing and distilled variants).

- Competitive benchmark results (the paper reports performance on reasoning tasks comparable to OpenAI o1).

Best for: Reasoning-heavy experimentation, research workflows, and teams tracking open reasoning model progress.

Mistral Large 2 (Mistral)

Release date: Jul 24, 2024

Mistral Large 2 is Mistral’s flagship model generation aimed at stronger code, math, reasoning, multilingual support, and function calling.

Main Features:

- Improved code + math + reasoning vs the prior generation.

- Multilingual improvements (explicitly highlighted by Mistral).

- Function calling / tool integration focus.

Best for: A strong non-OpenAI/Google/Anthropic option for general enterprise + multilingual + tool workflows.

Comparison Table of AI Models

| Model | Vendor | Released | Type | Multimodal | Key strengths |
|---|---|---|---|---|---|
| GPT-5.4 Thinking / Pro | OpenAI | 2026-03-05 | LLM | Yes | Professional work, stronger reasoning + coding + agent workflows |
| Nano Banana 2 | Google DeepMind | 2026-02-26 | Image model | Yes (image) | Pro-level image generation/editing with faster iteration |
| GPT-5.3-Codex-Spark | OpenAI | 2026-02-12 | LLM (coding-optimized) | No (text-only) | Real-time coding, ultra-fast iteration loops |
| Gemini 3 Flash | Google | 2025-12-17 | LLM | Yes (varies by surface) | Fast + cost-effective, strong everyday reasoning |
| Gemini 3 Pro | Google | 2025-11-18 | LLM | Yes (varies by surface) | Advanced reasoning, strong vision/doc understanding |
| Claude Haiku 4.5 | Anthropic | 2025-10-15 | LLM | Yes (text + image input) | Fast + cost-efficient, high throughput |
| Claude Sonnet 4.5 | Anthropic | 2025-09-29 | LLM | Yes (text + image input) | Strong reasoning + coding + agents/computer use |
| OpenAI o3 | OpenAI | 2025-04-16 | Reasoning model | Yes (varies) | Deep multi-step reasoning, tool workflows |
| Llama 4 | Meta | 2025-04-05 | Open LLM family | Yes (family) | Open ecosystem, customization, self-hosting flexibility |
| Grok 3 | xAI | 2025-02-19 | LLM | Varies | Reasoning-forward assistant workflows |
| Qwen2.5-Max | Alibaba Qwen | 2025-01-28 | LLM (MoE) | Varies | Large-scale MoE, strong alternative ecosystem |
| DeepSeek-R1 | DeepSeek | 2025-01-20 | Reasoning model | Text-first | RL-based reasoning approach, research-driven |
| Mistral Large 2 | Mistral | 2024-07-24 | LLM | Varies | Code/math/reasoning + multilingual + function calling |

How to Choose the Right Model

- If you care about “overall best” (quality + breadth): start with a flagship general model (GPT-5.4, Gemini 3 Pro).

- If speed and cost matter most: pick a fast tier (Gemini 3 Flash, Claude Haiku 4.5).

- If you’re building engineering agents: use coding-strong models (GPT-5.4 in Codex, Claude Sonnet 4.5).

- If your inputs are docs/screenshots/images: prioritize multimodal/vision strength (Gemini 3 Pro; o3 for image reasoning; Nano Banana 2 for image creation/editing).

- If you need control (self-hosting / customization): open model families (Llama 4) are a common starting point.
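The rules of thumb above can be written down as a small routing helper, for example to set a default model in an internal tool. This is a minimal sketch: the `Needs` fields and the priority order are assumptions made for illustration, and the returned strings are just the picks from this guide, not API model identifiers.

```python
from dataclasses import dataclass


@dataclass
class Needs:
    """Hypothetical requirement flags for one workload."""
    self_hosted: bool = False        # must run on your own infrastructure
    image_output: bool = False       # need to generate or edit images
    coding_agent: bool = False       # building engineering agents
    visual_input: bool = False       # docs, screenshots, or images as input
    latency_sensitive: bool = False  # speed and cost matter most


def pick_models(needs: Needs) -> list[str]:
    """Return candidate models, checking the most restrictive needs first."""
    # Hard constraints first: each one rules out most other options.
    if needs.self_hosted:
        return ["Llama 4"]
    if needs.image_output:
        return ["Nano Banana 2"]
    # Softer preferences, in an assumed priority order.
    if needs.coding_agent:
        return ["GPT-5.4", "Claude Sonnet 4.5"]
    if needs.visual_input:
        return ["Gemini 3 Pro", "o3"]
    if needs.latency_sensitive:
        return ["Gemini 3 Flash", "Claude Haiku 4.5"]
    # Default: a flagship general model for overall quality and breadth.
    return ["GPT-5.4", "Gemini 3 Pro"]


if __name__ == "__main__":
    print(pick_models(Needs(latency_sensitive=True)))
```

Checking self-hosting and image output first reflects that those requirements eliminate most alternatives outright; the order among the remaining preferences is a judgment call you would tune for your own workloads.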

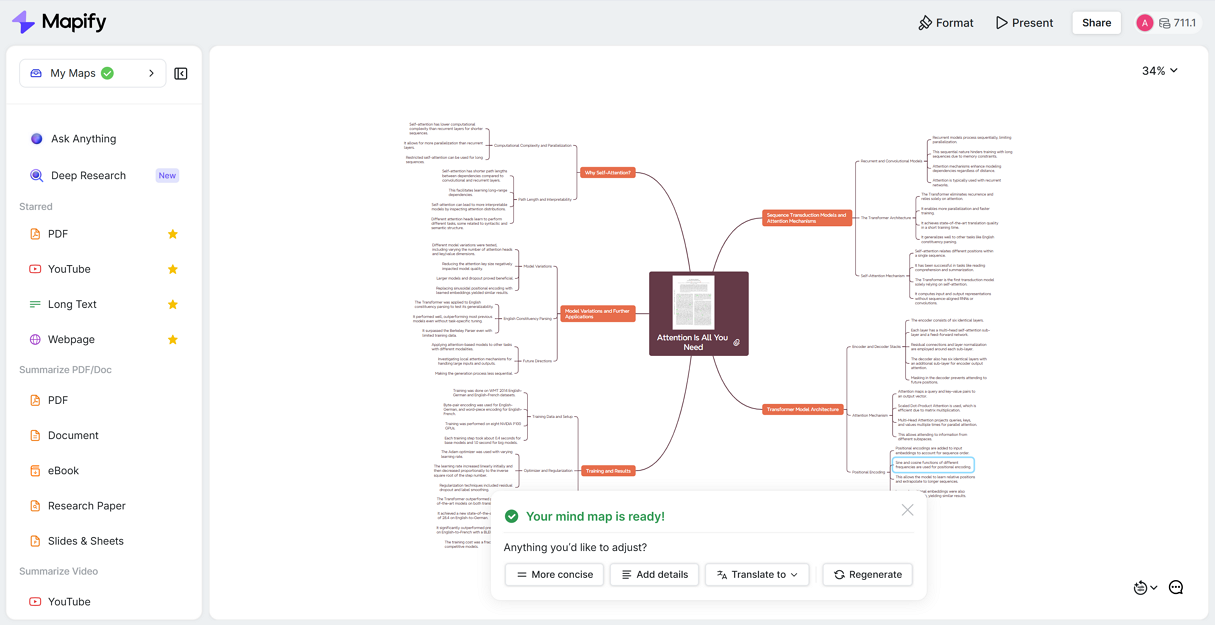

Mapify: AI Mind Mapping Tool

Mapify, the best free AI mind map summarizer, doesn’t require users to select an AI model within the product. It turns any content into structured mind maps instantly, with key points summarized. It also provides Ask Anything and Deep Research, which start an AI chat or an AI analysis from a single question.


Here’s how that connects to real scenarios:

- YouTube transcripts to study mind map: Turn a transcript into: topics → subtopics → key examples → takeaways. Then refine the structure in Mapify.

- Slides to presentation mind map: Convert slides into: section → key points → evidence → conclusion. Then reorganize the map into a clean narrative.

- PDF or research paper to argument mind map: Extract: research question → method → findings → limitations → implications. Then turn it into a navigable study/research map.

- Long text to chapter mind map: Create per-chapter outlines and merge them into one master map. Perfect for learning, writing, and content planning.
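The “per-chapter outlines merged into one master map” step is essentially a tree merge. The sketch below is not Mapify’s implementation or API; it assumes a hypothetical `Node` type and shows one way chapter outlines (chapter → key points) can be combined under a single root, merging nodes whose titles match, as happens when one long chapter is processed in two chunks.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class Node:
    """A hypothetical mind-map node: a title plus ordered child nodes."""
    title: str
    children: list["Node"] = field(default_factory=list)


def add_child(parent: Node, child: Node) -> None:
    """Attach child to parent, merging into an existing child with the same title."""
    for existing in parent.children:
        if existing.title == child.title:
            # Same topic seen before: merge its subtopics instead of duplicating it.
            for grandchild in child.children:
                add_child(existing, grandchild)
            return
    # New topic: store a copy so later merges never mutate the input outlines.
    parent.children.append(copy.deepcopy(child))


def master_map(title: str, chapters: list[Node]) -> Node:
    """Build one master map whose top level is the chapter outlines."""
    root = Node(title)
    for chapter in chapters:
        add_child(root, chapter)
    return root


def render(node: Node, depth: int = 0) -> list[str]:
    """Flatten the tree into indented outline lines (two spaces per level)."""
    lines = ["  " * depth + node.title]
    for child in node.children:
        lines.extend(render(child, depth + 1))
    return lines


if __name__ == "__main__":
    ch1 = Node("Chapter 1", [Node("Key idea A"), Node("Example")])
    ch2a = Node("Chapter 2", [Node("Key idea B")])
    ch2b = Node("Chapter 2", [Node("Takeaway")])  # second chunk of the same chapter
    print("\n".join(render(master_map("Book", [ch1, ch2a, ch2b]))))
```

Matching on exact titles is the simplest merge rule; a real tool would likely need fuzzier matching, since two chunks of the same chapter may not produce identical headings.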

The win: models generate text; Mapify makes the structure something people actually keep, edit, and reuse.

FAQ

1) What’s the difference between “AI models” and “LLMs”?

AI models are the broader term. LLMs are a major subset focused on language (and often multimodal now).

2) Which AI model is best overall in 2026?

There isn’t one best for everyone — flagships usually win on quality, while fast tiers win on speed and cost.

3) Do I need a multimodal model?

If you work with PDFs, screenshots, charts, UI images, or slides, it helps a lot. If you only use clean text, it’s optional.

4) What’s the best model for summarizing long documents?

Choose models with strong long-context support and reliable structured output (flagship models are usually safest).

5) What’s the best model for coding?

For agentic coding and repo-scale tasks, GPT-5.4 (in Codex) and Claude Sonnet 4.5 are common picks.

Final Thoughts

Picking the right AI model is less about chasing a single winner and more about matching the model to your workflow (speed vs depth, text vs multimodal, coding vs general). This post tracks the most popular options and will keep evolving as new releases arrive. Try Mapify free for AI mind mapping and AI deep research.
